Hello everyone!

"Salesforce Apex Hours" is a recurring event to talk about salesforce ! Some times we'd like to meet on one location and some time online. We are happy that we completed 7 successful Online event in 2017 on below topic

1) Microservices

2) Einstein Intent

3) Winter 18

4) Salesforce DX

5) Hyper Batch Job

6) Lightning Component Framework

7) Live Agent

I want to thank our speakers for making these online events successful. If you missed any of the events above, you can still watch the recorded videos and view the slides. Here is a summary of each event:

1) Microservices

In this session, Don Robins presented "Mitigate with Mono-Purpose Microservices".

The flexibility built into the Salesforce platform allows developers to build incredible enterprise and mobile applications using a No Code, Low Code or High Code approach. Developers can build most of their apps with declarative tools, and code their way to success with Apex, Visualforce and Lightning Components when needed. But what’s a developer to do when the platform tools just don’t let them do what they need? In this presentation we’ll explore how to mitigate such requirements by integrating Salesforce with external Heroku micro-services to provide extended solutions that typically can't be met with Force.com capabilities.

Agenda :-

- Microservices: WHAT, WHY, HOW

- My Microservice: PDF Parser, a practical mitigation use case

- Sample Microservice demo and code walk-through

- Take-aways and Links

2) Einstein Intent

In this session, Daniel Peter (Salesforce MVP) presented "Einstein Intent".

Agenda :-

- Introduction to Einstein Intent

- Demo

- FAQ

3) Winter 18

In this session, Jitendra Zaa (Salesforce MVP) presented "Winter 18 for Developers".

Agenda :-

- What’s new in Winter 18 for Developers

- Enhancements in Flow

- Other platform improvements

4) Salesforce DX

In this session, Jitendra Zaa (Salesforce MVP) presented "Salesforce DX".

Salesforce DX provides an integrated, end-to-end lifecycle designed for high-performance agile development. In this hands-on session, we looked at how Salesforce DX can be used to create scratch orgs, run automated tests, and load data. We also discussed the CLI as well as the Force.com IDE.

Agenda :-

- Introduction to Salesforce DX

- Creating a Scratch Org

- Deploying Metadata to a Scratch Org

- Creating a Skeleton Workspace

- Running Test Classes

- Getting Help

- Using Force.com IDE with Salesforce DX

- Q&A

5) Hyper Batch Job

In this session, Daniel Peter (Salesforce MVP) presented "Hyper Batch Job".

Agenda :-

- What is HyperBatch

- Differences between traditional Apex Batch jobs and HyperBatch

- Demo

- FAQ

6) Lightning Component Framework

In this session, Mohith Shrivastava presented "Lightning Component Framework".

Agenda :-

- Introduction to Lightning Components

- Difference between Lightning Components and Visualforce

- Thinking in terms of Component Model

- Basics of Lightning: Inside the Bundle

- Events: Application and Component Events

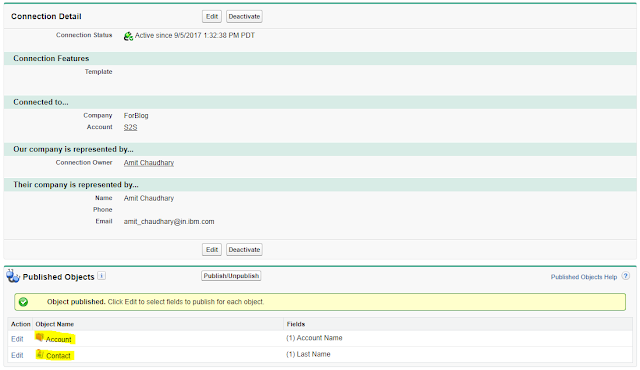

7) Live Agent

In this session, Amit Chaudhary presented "Live Agent".

Agenda :-

- What is Live Agent

- Pre-Chat and Post-Chat Forms

- Demo

- FAQ

Please follow us on the pages below for future sessions :-

Facebook Page :- https://www.facebook.com/FarmingtonHillsSfdcdug/

Meetup Link :- http://www.meetup.com/Farmington-Hills-Salesforce-Developer-Meetup/

Twitter Tag :- #FarmingtonHillsSFDCdug #SalesforceApexHours

YouTube :- https://www.youtube.com/channel/UChTdRj6YfwqhR_WEFepkcJw/videos

Please email me if you would like to speak at one of our online events.

Thanks,

Amit Chaudhary

@amit_sfdc

amit.salesforce21@gmail.com